Revisiting a Robotics Competition Six Years Later

Returning to a high school robotics competition with six years of engineering education and modern control theory.

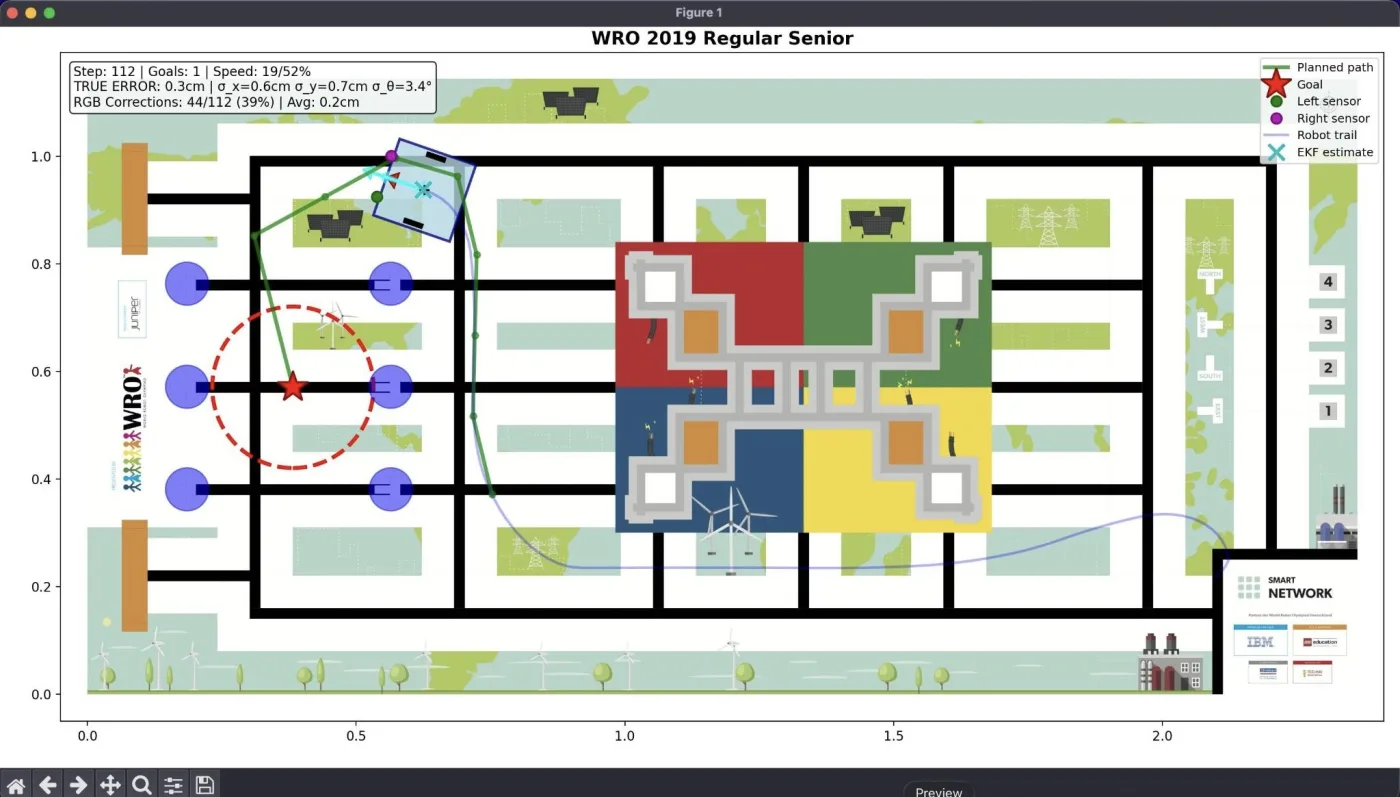

Simulation environment showing robot navigating the WRO 2019 field with trajectory visualization

I was sitting in my Introduction to Robotics lecture when it hit me. We were covering Extended Kalman Filters for state estimation, and suddenly I was thinking about a Lego robot I'd built in high school. The WRO 2019 Senior competition. The one where we placed in the top 10 in Germany but didn't quite make the podium.

Six years ago, I solved that challenge with dead reckoning. Drive forward for exactly 20 encoder rotations, turn 90 degrees, align by pressing against the wall. No probability, no sensor fusion, no real understanding of uncertainty. Just count wheel rotations and hope for the best.

Now I had six years of education behind me. A bachelor's in Mechanical Engineering from TUM. A master's in Robotics and Autonomous Systems at Boston University. I'd just spent weeks learning about probabilistic robotics, trajectory optimization, model predictive control. And I couldn't stop thinking: how would I solve that problem today?

Here's what we did back then:

That's a practice run from 2019. We scored 200 points in 1 minute 38 seconds, which put us in the top 10 in Germany. The task was to scan colored markers, determine a delivery order, and navigate to specific zones to place objects. All autonomous. All with Lego Mindstorms.

Our approach was simple but brittle. We used encoder counts to measure distance traveled, color sensors to detect markers, and a clever trick: we'd deliberately drive into walls to reset our position. The wall acted as a physical reference point. Press against it, zero the error, continue.

It worked surprisingly well at home. We spent weeks optimizing each trajectory, manually tuning every single movement. Turn for exactly this many encoder counts. Drive forward for exactly that long. Align against this wall. It was tedious, but we got really good at it.

Then we got to the competition. Different venue. Different surface. The field was made of wood, but the wood at the competition had slightly different friction than what we'd practiced on. Our carefully tuned encoder counts were suddenly wrong. The robot would turn a bit too much, miss the alignment, and once you're off by even a small amount with dead reckoning, there's no recovery. The error just accumulates.

We had one run fail because of exactly that. A tiny difference in surface friction threw off our turning calibration, and the whole run collapsed. It was frustrating because we knew our approach was good, we'd just gotten unlucky with environmental variations we couldn't test for.

So sitting in that robotics lecture in November 2025, I started thinking. What if I rebuilt that challenge, but with everything I'd learned since? What if instead of encoder counts, I used probabilistic localization? What if instead of hardcoded trajectories, I used optimal control?

I spent about a week on it. I didn't have access to the original Lego parts anymore, so this had to be a simulation. But I wanted it to be realistic. I found the actual field PDF from 2019 and converted it to a pixel-perfect numpy array. 6695 by 3240 pixels at 0.35 millimeters per pixel. The simulation could sample the exact color at any position on the field, just like the real color sensors would.

Then I rebuilt the robot model. Not with Lego, but with proper dynamics. Differential drive kinematics. Realistic motor noise models based on what I remembered from competition. Encoder errors. Color sensor noise. Everything that made the real robot imperfect.

The architecture this time was completely different. I implemented an Extended Kalman Filter for localization. Instead of trusting encoder counts blindly, the EKF fuses encoder odometry with continuous RGB measurements from the field. It builds a probabilistic estimate of where the robot actually is, accounting for uncertainty in both the motion and the sensors.

For control, I used Model Predictive Control instead of hardcoded trajectories. MPC looks ahead, optimizes a sequence of control commands over a preview horizon, then executes the first command and re-plans. It's adaptive. If the robot drifts off course, MPC automatically corrects because it's constantly re-optimizing based on the current state estimate.

The interesting observation is that this takes longer upfront. I spent days tuning the MPC cost weights, adjusting the EKF noise parameters, debugging why the localization would diverge in certain conditions. It's not done yet. The EKF still isn't fully stable, and I'm still making the system more robust.

But here's the thing: in 2019, I spent weeks manually optimizing every single trajectory. Turn here, drive there, align like this. Hundreds of individual parameters, all tuned by trial and error. With the new approach, the upfront work is higher, but once the framework is solid, I shouldn't need to manually tune individual paths anymore. The planner generates optimal trajectories automatically. The controller follows them adaptively. The localizer handles uncertainty.

It's a fundamentally different philosophy. Instead of manually compensating for every source of error, you build a system that's robust to error by design. Instead of hardcoding responses to specific situations, you use optimization and feedback to handle situations you didn't explicitly plan for.

I'm applying everything I learned in my classes. Dynamics modeling. Probabilistic state estimation. Optimal control theory. It's one thing to solve problems on homework assignments. It's completely different to apply those techniques to a real problem you tried to solve years ago with simpler tools.

The project is on hold now. I don't have time to keep working on it with everything else going on. But I want to finish it eventually. The goal is to get the simulation running reliably, prove that the modern approach actually works better, then rebuild the whole thing in Lego again. Take it full circle. See if it really is faster and more robust than what we did in 2019.

I think it will be. But I won't know for sure until I build it, run it on the actual field, and time it. That's the part I'm looking forward to. Closing the loop between theory and practice. Between the high school version of me who just wanted to make the robot work, and the current version who understands why probabilistic methods matter.

For now, it sits as unfinished code. A simulation that mostly works, with an EKF that needs more tuning and an MPC that needs more testing. Though honestly, I'll probably finish it. I still have that competitive drive from 2019. I want to see if I can beat our old time.