Building a Personal Learning Companion

Lumen: An AI learning companion that adapts to how you actually learn, not how ChatGPT thinks you should.

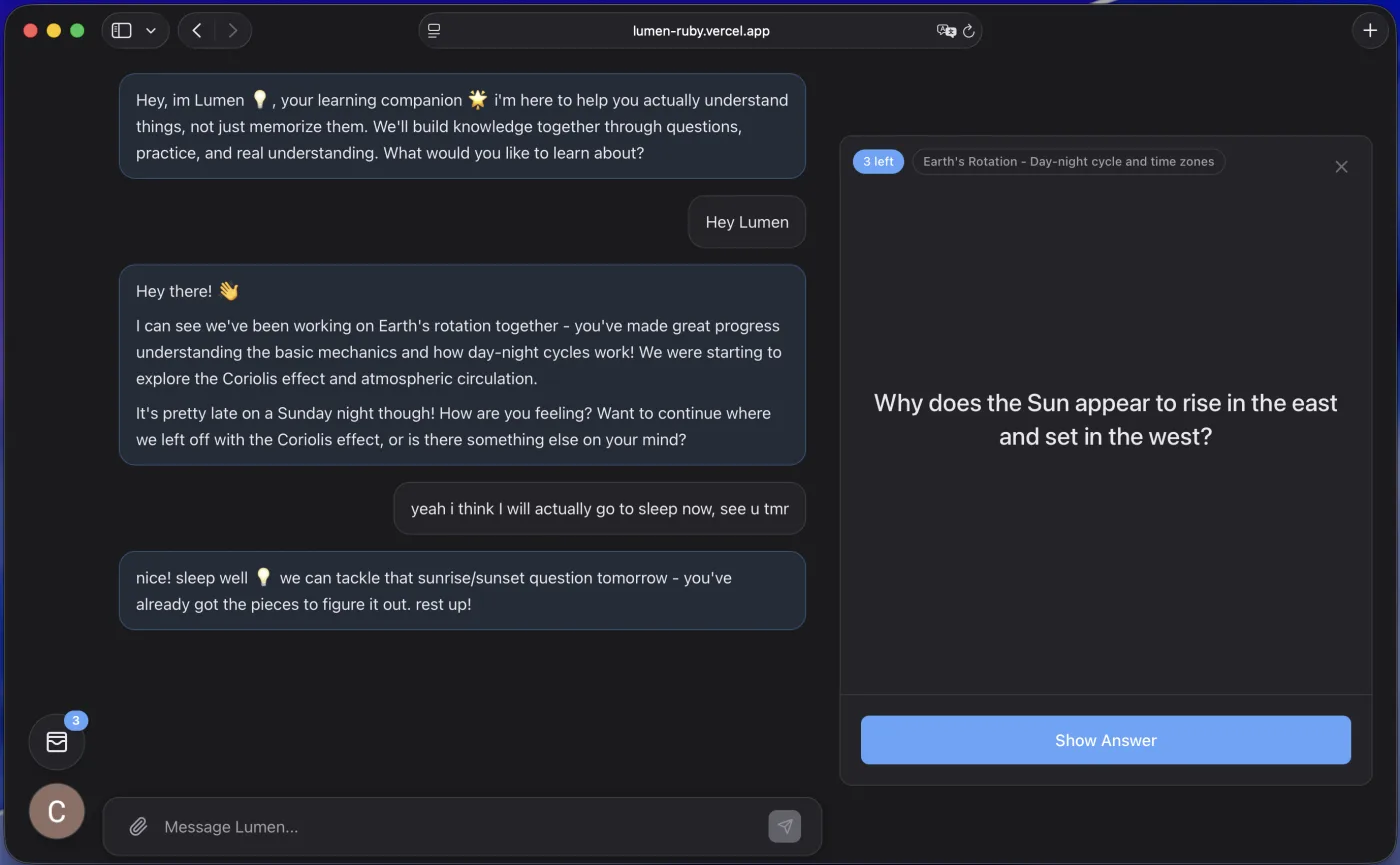

Lumen interface showing chat and learning tools

Starting my master's program, I found myself sitting in classrooms again. The pace was either too fast or too slow, never quite right. When it was too slow, I'd get restless. When it was too fast, I'd fall behind. But I didn't want to hold up the class by asking the professor to slow down or speed up based on what I personally needed.

So I'd open Claude during breaks to fill in the gaps. "Explain gradient descent again, but from this specific angle." The problem was, every time I opened a new conversation, I had to re-explain my background. What I already knew. What I was confused about. Where I was coming from. It got annoying fast.

Then I'd get generic answers. Textbook explanations that assumed nothing about me specifically. No consideration for how I learn best, what analogies would click for my brain, what I'd studied before. Just... answers. Correct, but impersonal. Like asking a very knowledgeable stranger for help every single time.

I wanted something that knew me. That remembered our last conversation. That understood I learn better with visual examples than long paragraphs. That wouldn't just give me the answer to a problem but would guide me to discover it myself. Something that felt less like a search engine and more like a patient tutor who actually cared about my understanding.

So in October 2025, I started building Lumen.

The vision was straightforward: an AI learning companion that builds a persistent understanding of who you are as a learner. It would remember your knowledge gaps, your learning style, your interests. It would use spaced repetition to help concepts stick. It would show you graphs and diagrams instead of just text when that made sense. And critically, it wouldn't just hand you answers. It would ask questions that led you to figure things out yourself.

The technical architecture wasn't particularly novel. Next.js frontend, Supabase for the database, Anthropic's Claude API for the AI. What made it different was the persistence layer. I built a memory system where Claude could create and edit markdown files about you. Your learning profile. Your interests. Your preferred style. These files became part of the context for every conversation, so Lumen would actually remember who you were.
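A minimal sketch of how memory files can be folded into the context of each conversation. This is an illustration and not Lumen's actual code: the `MemoryFile` shape, the `buildSystemPrompt` helper, the base prompt text, and the file names are all assumptions made for the example. The resulting string would be passed as the system prompt of whatever chat API call the app makes.

```typescript
// Hypothetical shape of one memory file; names are illustrative, not Lumen's real schema.
interface MemoryFile {
  name: string;    // e.g. "learning-profile.md"
  content: string; // markdown body written and edited by the model
}

// Assumed base instructions; the real prompt is not shown in this post.
const BASE_PROMPT =
  "You are a patient tutor. Guide the learner with questions before giving answers.";

// Concatenate the base instructions with every non-empty memory file,
// so each new conversation starts with what is already known about the learner.
function buildSystemPrompt(files: MemoryFile[], base: string = BASE_PROMPT): string {
  const sections = files
    .filter((f) => f.content.trim().length > 0)
    .map((f) => `## ${f.name}\n${f.content.trim()}`);
  if (sections.length === 0) return base;
  return `${base}\n\n# What you know about this learner\n\n${sections.join("\n\n")}`;
}

// Example usage with made-up file contents; the empty file is skipped.
const prompt = buildSystemPrompt([
  { name: "learning-profile.md", content: "Prefers visual examples over long paragraphs." },
  { name: "interests.md", content: "" },
]);
console.log(prompt);
```

Keeping the memory as plain markdown means the same files can be read by the model, edited by the model, and inspected by a human without any extra tooling.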

I also implemented a learning plan system where you could create structured curricula for yourself, track progress through concepts, and generate flashcards for spaced repetition. The idea was that learning isn't just conversations; it's systematic practice over time. You build the cards during your chat sessions, then review them when the spaced repetition algorithm says they're due.
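To make "review them when they're due" concrete, here is a small sketch of one common scheduling scheme, the Leitner box system. The post does not say which algorithm Lumen uses, so treat this as a stand-in: the `Flashcard` fields, the interval table, and both function names are assumptions for illustration.

```typescript
// Hypothetical card shape; field names are illustrative.
interface Flashcard {
  front: string;
  back: string;
  box: number; // index into BOX_INTERVALS_DAYS
  dueAt: Date; // next time the card should be shown
}

// Assumed review intervals per box, in days.
const BOX_INTERVALS_DAYS: number[] = [1, 2, 4, 8, 16];
const MS_PER_DAY = 24 * 60 * 60 * 1000;

// After a review, promote the card one box on a correct answer (capped at the last box),
// or send it back to the first box on a miss, then reschedule it.
function reviewCard(card: Flashcard, correct: boolean, now: Date): Flashcard {
  const box = correct ? Math.min(card.box + 1, BOX_INTERVALS_DAYS.length - 1) : 0;
  const days = BOX_INTERVALS_DAYS[box] ?? 1;
  const dueAt = new Date(now.getTime() + days * MS_PER_DAY);
  return { ...card, box, dueAt };
}

// Cards whose due time has passed are ready for review.
function dueCards(cards: Flashcard[], now: Date): Flashcard[] {
  return cards.filter((c) => c.dueAt.getTime() <= now.getTime());
}

// Example usage: a new card answered correctly moves up one box.
const now = new Date("2025-11-01T00:00:00Z");
const card: Flashcard = { front: "What is a gradient?", back: "Vector of partial derivatives", box: 0, dueAt: now };
const next = reviewCard(card, true, now);
console.log(next.box, next.dueAt.toISOString());
```

Cards you keep getting right drift toward longer intervals, while a single miss brings a card back into daily rotation, which is the basic trade-off any spaced repetition scheduler makes.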

But the real work was in the prompting. I spent hours refining how Lumen should teach. How to make it ask questions instead of explaining. How to make it patient when you're confused. How to make it celebrate progress without being condescending. This is where I think most companies building AI products don't spend enough time. They focus on features and infrastructure, but the quality of the interaction lives entirely in those prompts.

I'm convinced that prompt engineering is an underrated craft. It's not just about getting the AI to do what you want; it's about shaping an entire interaction style. How does it respond when someone's frustrated? When they're making progress? When they're stuck? A few words changed in the system prompt can completely alter the feeling of using the product. I spent more time on those prompts than on most of the features.

The technical challenge that surprised me wasn't the AI integration. That part was actually straightforward once I understood the Anthropic API. The hard part was organizing the application architecture as it grew from a simple prototype into something with real features. Suddenly I had learning plans, flashcards, file uploads, a memory system, practice sessions, visualization tools. Data was flowing through the app in ways I hadn't anticipated.

Keeping a high-level view of everything while implementing features became the real challenge. Where should this state live? Which component should handle this interaction? How do these pieces fit together? The architecture questions were harder than the individual feature implementations. I'd think I had a clear mental model of the app, then add a new feature and realize my model was incomplete.

In early November, I set myself a deadline. I wanted people to actually use this thing, not just hear me talk about it. So I sent it to a few friends and asked them to try it. Their feedback was valuable in a way I didn't expect.

They got confused about what Lumen was supposed to be. Was it for homework help? Was it a study app? Was it ChatGPT with extra features? I'd been so deep in my own head about what I was building that I'd lost perspective on how someone else would see it. The interface made sense to me because I'd built it. To them, it wasn't obvious what they were supposed to do or why this was better than just using ChatGPT.

That moment taught me something important about building products. You can't stay in your own head. You need critical feedback from people who aren't invested in your vision, who don't know what you're trying to achieve, who will just tell you when something doesn't make sense. Getting outside of my own thinking was uncomfortable but necessary.

By mid-November, I had to put Lumen on hold. My master's coursework was demanding more time than I'd anticipated, and I couldn't split focus effectively. The project sits unfinished now, functional but not polished. I still use it personally when studying, which tells me there's something valuable there. But it's far from being ready for other people.

There's also the obvious question: why build this when ChatGPT exists? When OpenAI and Anthropic already have excellent AI products for learning? When I'm essentially competing with companies that have massive teams and resources?

The honest answer is that I wasn't trying to compete. I was trying to build something that worked better for me personally, and in doing so, learn how to build complex applications. And I learned a lot. About organizing application architecture. About the craft of prompt engineering. About the importance of getting real user feedback early. About what it takes to go from a prototype that works for you to something that makes sense to someone else.

I learned that building complex apps requires architectural discipline from the start. That you can't just add features and hope the structure holds together. That you need to think about data flow and state management before you're drowning in tangled code. These are lessons I probably could have learned from a tutorial, but actually feeling the pain of a messy architecture made them stick.

Will I finish Lumen? Maybe. There's still a part of me that wants to see it become something polished, something other people would actually use. But even if I don't, working on it taught me things I wouldn't have learned any other way. About building products, about technical architecture, about the gap between what makes sense to you and what makes sense to users.

One thing I know for certain: this won't be the last AI agent I build.